Understanding Sensitive Personal Data and Its Protection

The global average cost of a data breach fell 9 percent to 4.44 million dollars in 2025, even as the United States hit a record 10.22 million dollars per breach, driven by steeper regulatory fines and higher detection and escalation costs. Here is the thing: that split reality tells the story of sensitive personal data in 2025. AI accelerates both defense and offense, regulators harden penalties, and consumers grow less tolerant of sloppy data practices.

Against that backdrop, Apple is promoting its Private Cloud Compute design as private-by-default AI processing, Microsoft is reworking Recall after a privacy backlash, and European regulators have already tallied billions in penalties this year. The operational and reputational fallout lands directly on investors, consumers, and employees.

Now past the peak of the hype cycle, sensitive personal data protection is shifting from slogans to systems: Apple’s Private Cloud Compute promises inspectable, stateless, encrypted AI processing, Microsoft has moved Recall to opt-in with encryption and tighter controls, and regulators are signaling that compliance must be substantive, not performative.

The result is a new playbook that prioritizes data minimization, on-device processing, verifiable privacy architectures, and continuous AI governance so organizations can reduce breach costs, preserve trust, and avoid the fines that now make the United States the most expensive place to get breached.

Key Data

IBM reports the global average breach cost fell to 4.44 million dollars in 2025, while the United States rose to 10.22 million dollars, and 97 percent of organizations that reported an AI-related breach lacked proper AI access controls.

GDPR fines surpassed 3 billion euros in the first half of 2025 alone, highlighting regulator expectations that go beyond check-the-box compliance.

Seventy-one percent of U.S. adults say they are concerned about how the government uses data it collects about them, reflecting broad public unease that amplifies brand and regulatory risk when sensitive data is exposed.

Why This Data Matters

Falling global averages can mask local risk premiums where fines, detection, and escalation costs rise. Sensitive personal data now sits at the center of a dual pressure system: the financial imperative to contain breaches faster and the legal imperative to prove privacy by design. When nearly every organization hit by an AI-related breach lacked basic access controls, and enforcement totals keep climbing, organizations need verifiable architectures and documented governance for every system that touches personal data.
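To make the "risk premium" concrete, the arithmetic below works through the IBM figures quoted above. It is a back-of-the-envelope sketch: the 9 percent decline is a rounded figure, so the implied prior-year average is approximate, and the constant names are illustrative.

```python
# Figures from IBM's 2025 Cost of a Data Breach findings cited above (USD millions).
GLOBAL_AVG_2025 = 4.44
US_AVG_2025 = 10.22
GLOBAL_DECLINE = 0.09  # reported year-over-year drop, rounded

# Back out the prior-year global average implied by a 9 percent decline.
implied_prior_global = GLOBAL_AVG_2025 / (1 - GLOBAL_DECLINE)

# How many times the global average a U.S. breach costs, and the absolute gap.
us_multiple = US_AVG_2025 / GLOBAL_AVG_2025
us_gap = US_AVG_2025 - GLOBAL_AVG_2025

print(f"Implied prior-year global average: ${implied_prior_global:.2f}M")
print(f"U.S. breach cost: {us_multiple:.2f}x the global average (${us_gap:.2f}M more)")
```

A U.S. breach costs roughly 2.3 times the global average, a gap of about 5.78 million dollars per incident, which is the premium a falling global number hides.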

Understanding Sensitive Personal Data and Its Protection: Step-by-Step Guide

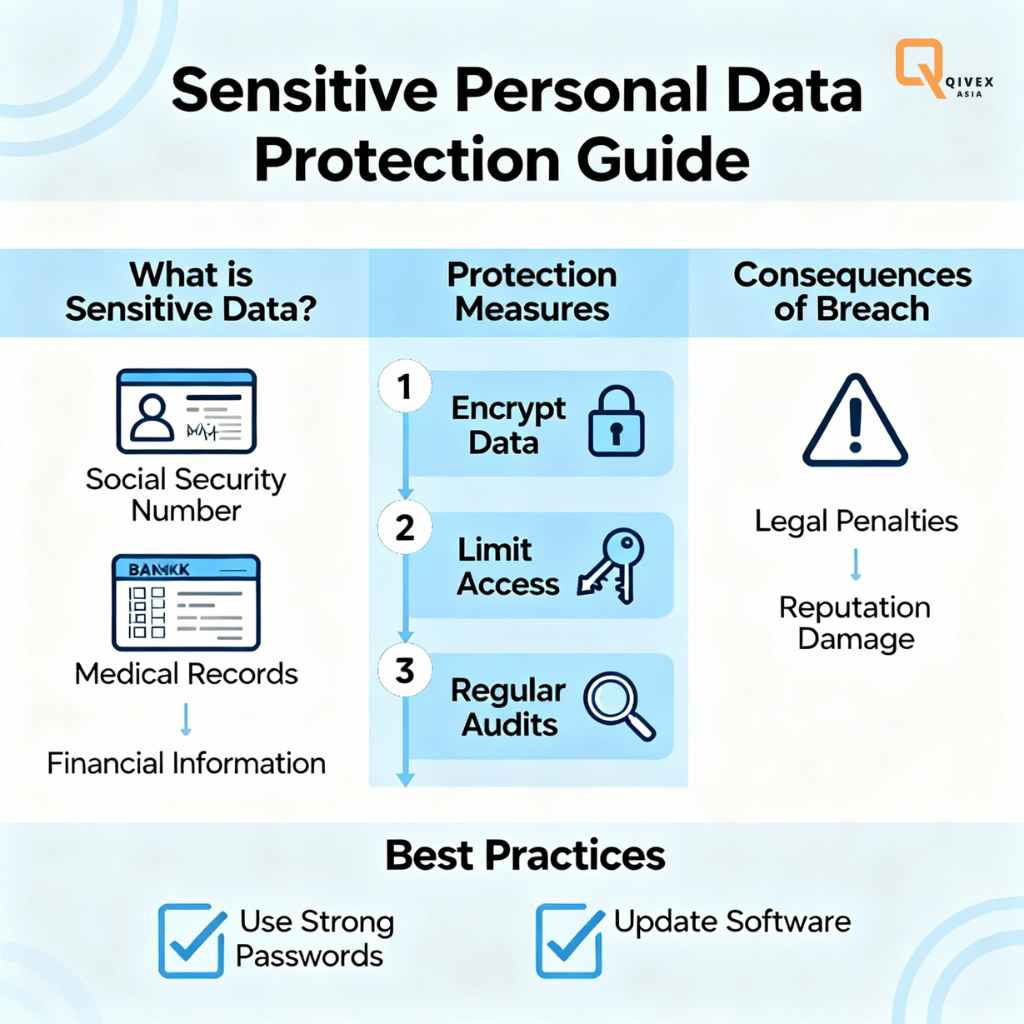

Map and Minimize What Is Collected

Sensitive personal data protection starts with a live inventory of what is collected, where it resides, who accesses it, and why it is retained. IBM’s breach lifecycle data shows that sprawl across multiple environments increases complexity, cycle time, and cost. Translate that inventory into a minimization policy that cuts collection to the least data needed for the stated purpose and shifts processing to the edge or on-device when feasible, in line with the privacy-preserving patterns that consumers and regulators now favor.
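A minimal sketch of an inventory row and a purpose-based minimization policy follows. The field names, purposes, and the `MINIMIZATION_POLICY` mapping are hypothetical; a real inventory would be populated from data discovery tooling rather than hard-coded.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class InventoryEntry:
    """One row of a data inventory: what is held, where, who reads it, and why."""
    field_name: str
    location: str           # where the data resides, e.g. "crm_db"
    accessed_by: frozenset  # roles allowed to read it
    purpose: str            # stated purpose for collection
    retention_days: int

# Hypothetical minimization policy: each purpose maps to the least data it needs.
MINIMIZATION_POLICY = {
    "order_fulfillment": {"name", "shipping_address"},
    "fraud_check": {"email", "payment_token"},
}

def minimize(record: dict, purpose: str) -> dict:
    """Drop every field the policy does not allow for this purpose (unknown purpose -> nothing)."""
    allowed = MINIMIZATION_POLICY.get(purpose, set())
    return {k: v for k, v in record.items() if k in allowed}

def over_collected(inventory: list) -> list:
    """Return inventory entries holding a field their stated purpose does not need."""
    return [e for e in inventory
            if e.field_name not in MINIMIZATION_POLICY.get(e.purpose, set())]

if __name__ == "__main__":
    record = {"name": "A. Jones", "shipping_address": "1 Main St", "date_of_birth": "1990-01-01"}
    print(minimize(record, "order_fulfillment"))
    inventory = [
        InventoryEntry("shipping_address", "orders_db", frozenset({"fulfillment"}), "order_fulfillment", 365),
        InventoryEntry("date_of_birth", "orders_db", frozenset({"fulfillment"}), "order_fulfillment", 365),
    ]
    print([e.field_name for e in over_collected(inventory)])
```

Defaulting an unknown purpose to an empty allow-list means new use cases collect nothing until someone documents why they need data.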

When data must traverse environments, standardize formats and apply classification tags so security teams can detect problems faster, since faster identification and containment correlate with lower breach costs. This sounds like housekeeping, but the organizations that reduce data surface area and centralize visibility are the ones most likely to keep breach lifecycles short and fines in check.
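Classification tags can be sketched as field-level labels checked before data crosses into another environment. The four levels, the `FIELD_TAGS` mapping, and the redaction marker are assumptions for illustration, not a standard taxonomy.

```python
from enum import IntEnum

class Classification(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    SENSITIVE_PERSONAL = 3  # e.g. health data, biometrics, government IDs

# Hypothetical field-level tags; in practice these would come from the data inventory.
FIELD_TAGS = {
    "product_id": Classification.PUBLIC,
    "email": Classification.CONFIDENTIAL,
    "diagnosis_code": Classification.SENSITIVE_PERSONAL,
}

def classify(field: str) -> Classification:
    """Untagged fields default to the strictest level, so unknown data is never under-protected."""
    return FIELD_TAGS.get(field, Classification.SENSITIVE_PERSONAL)

def prepare_for_transfer(record: dict, destination_clearance: Classification) -> dict:
    """Pass through values the destination environment is cleared for; redact the rest."""
    return {
        k: v if classify(k) <= destination_clearance else "[REDACTED]"
        for k, v in record.items()
    }
```

Sending `{"product_id": "p-9", "email": "a@example.com"}` to an environment cleared for `INTERNAL` keeps the product ID and redacts the email, and the tag travels with the policy rather than with each team's memory.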

Ground Rules and Consent, Documented and Testable

Move beyond banner fatigue by defining the lawful basis and explicit, revocable consent for each sensitive data use case, then operationalize both in workflows and logs that hold up when auditors and DPOs scrutinize them. Formal policies without enforcement do not move the needle, so bind consent states to identity, timestamp each change, and make sure downstream services honor revocations; that is the kind of substantive practice regulators increasingly demand.
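One way to bind consent to identity, timestamp every change, and make revocations stick is an append-only ledger that downstream services query on every call. The sketch below assumes timezone-aware timestamps; `ConsentLedger` and `send_marketing_email` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class ConsentEvent:
    subject_id: str  # the identity this consent is bound to
    purpose: str     # e.g. "marketing_email"
    granted: bool    # True = grant, False = revocation
    at: datetime     # timezone-aware timestamp of the change

class ConsentLedger:
    """Append-only consent log: every change is kept; the latest per (subject, purpose) wins."""

    def __init__(self) -> None:
        self._events: list = []

    def record(self, subject_id: str, purpose: str, granted: bool,
               at: Optional[datetime] = None) -> None:
        self._events.append(ConsentEvent(subject_id, purpose, granted,
                                         at or datetime.now(timezone.utc)))

    def has_consent(self, subject_id: str, purpose: str) -> bool:
        relevant = [e for e in self._events
                    if e.subject_id == subject_id and e.purpose == purpose]
        if not relevant:
            return False  # no recorded consent means no processing
        # Stable sort keeps append order for equal timestamps, so the last write wins ties.
        return sorted(relevant, key=lambda e: e.at)[-1].granted

    def history(self, subject_id: str) -> list:
        """Audit trail for one subject, oldest first."""
        return sorted((e for e in self._events if e.subject_id == subject_id),
                      key=lambda e: e.at)

def send_marketing_email(ledger: ConsentLedger, subject_id: str) -> str:
    """A downstream service checks the ledger on every call, so revocations apply immediately."""
    if not ledger.has_consent(subject_id, "marketing_email"):
        return "skipped"
    return "sent"
```

Keeping every event instead of overwriting a single flag is what gives auditors the timestamped history; the current state is derived, never stored on its own.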

Bake in AI governance from the start: 63 percent of breached organizations had no AI governance policy or were still developing one, and 97 percent of organizations that reported an AI-related breach lacked proper AI access controls. That gap multiplies risk when personal data powers models. Finally, rehearse disclosures and crisis communications on paper and in drills, because public concern over data use is elevated and brand damage compounds when explanations look improvised.
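AI access controls can start as a deny-by-default gate: a model may read personal data only for a (model, dataset, purpose) combination that governance has explicitly approved. The register contents and names below are hypothetical, and log handler configuration is left to the host application.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("ai_governance")

@dataclass(frozen=True)
class AIUseApproval:
    model_id: str
    dataset: str
    purpose: str

# Hypothetical register of approved AI uses of personal data, owned by a governance function.
APPROVED_USES = {
    AIUseApproval("support-summarizer-v2", "support_tickets", "ticket_summary"),
}

def authorize_ai_access(model_id: str, dataset: str, purpose: str) -> bool:
    """Deny by default: a model reads personal data only for an explicitly approved use."""
    allowed = AIUseApproval(model_id, dataset, purpose) in APPROVED_USES
    # Log every decision, allowed or not, so reviewers can audit how models touch personal data.
    logger.info("ai_access model=%s dataset=%s purpose=%s allowed=%s",
                model_id, dataset, purpose, allowed)
    return allowed
```

Approving a new model or a new dataset then becomes a reviewable change to the register rather than an implicit side effect of deployment.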

Secure the Data in Motion, at Rest, and in Use

Sensitive personal data demands encryption at rest and in transit, strict key management, and least-privilege access with hardware-backed identity where possible; these controls matter all the more because detection and escalation dominate U.S. breach cost profiles. Layer continuous monitoring over access paths, especially for high-value stores such as customer and employee PII, because IBM’s analysis shows these records are frequent breach targets and drive expense.
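Continuous monitoring can begin with something as simple as comparing each user’s reads from PII stores against a historical baseline. This is an illustrative threshold heuristic, not a full detection system; the store names, `multiplier`, and `floor` values are assumptions.

```python
from collections import Counter

PII_STORES = {"customer_pii", "employee_pii"}  # hypothetical high-value stores

def flag_unusual_access(access_log, baseline, multiplier=3.0, floor=50):
    """
    access_log: iterable of (user, store) read events for one time window.
    baseline:   user -> typical PII reads per window (hypothetical, learned from history).
    Flags users whose PII reads exceed multiplier * baseline, with a floor so that
    users without history are not flagged for a handful of reads.
    """
    counts = Counter(user for user, store in access_log if store in PII_STORES)
    flagged = []
    for user, reads in counts.items():
        limit = max(baseline.get(user, 0) * multiplier, floor)
        if reads > limit:
            flagged.append((user, reads))
    return sorted(flagged)
```

Reads from non-PII stores are ignored entirely, which keeps alert volume focused on the records that drive breach cost.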

Build incident response muscle to shorten mean time to identify and mean time to contain: the 2025 breach lifecycle fell to a nine-year low of 241 days, and every day saved reduces the risk of exfiltration and fines. Treat AI endpoints, plug-ins, and SaaS links as first-class attack surfaces, since supply chain compromise was the most common cause of AI-related security incidents, and enforce consistent authentication and logging across that mesh.
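Tracking the two lifecycle metrics only requires three timestamps per incident. The sketch below assumes a hypothetical record schema with `start`, `identified`, and `contained` dates; the lifecycle figure is the sum of the two means, the same shape as the 241-day number above.

```python
from datetime import date
from statistics import mean

def lifecycle_metrics(incidents):
    """
    incidents: list of dicts with 'start', 'identified', 'contained' dates (hypothetical schema).
    MTTI = mean days from start to identification.
    MTTC = mean days from identification to containment.
    """
    mtti = mean((i["identified"] - i["start"]).days for i in incidents)
    mttc = mean((i["contained"] - i["identified"]).days for i in incidents)
    return {"mtti_days": mtti, "mttc_days": mttc, "lifecycle_days": mtti + mttc}

incidents = [
    {"start": date(2025, 1, 1), "identified": date(2025, 1, 11), "contained": date(2025, 1, 21)},
    {"start": date(2025, 2, 1), "identified": date(2025, 2, 21), "contained": date(2025, 3, 3)},
]
print(lifecycle_metrics(incidents))
```

Splitting the lifecycle into its two halves shows whether the slow part is detection or containment, which points to different investments.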

On-Device First, Private Compute When Cloud Is Needed

Design for on-device AI processing whenever possible, then escalate to private-by-design cloud environments that are inspectable, stateless, and cryptographically constrained so sensitive data cannot persist. Apple positions Private Cloud Compute as a new frontier for private AI processing: it routes only task-relevant data, applies end-to-end protections, and makes its architecture inspectable by independent researchers.
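The on-device-first pattern can be sketched as a routing decision that forwards only task-relevant fields when a task must leave the device. This does not model Apple’s actual Private Cloud Compute interfaces or attestation; the token budget, `AITask` type, and `"private_cloud"` label are hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical capacity of the on-device model; real limits depend on hardware and model.
ON_DEVICE_TOKEN_BUDGET = 4_000

@dataclass(frozen=True)
class AITask:
    name: str
    estimated_tokens: int
    payload: dict
    required_fields: frozenset  # the only fields the task actually needs

def route(task: AITask):
    """Prefer on-device processing; escalate to a private cloud tier with only task-relevant fields."""
    if task.estimated_tokens <= ON_DEVICE_TOKEN_BUDGET:
        return "on_device", task.payload
    minimal = {k: v for k, v in task.payload.items() if k in task.required_fields}
    return "private_cloud", minimal
```

The minimization happens on the device before anything is sent, so the cloud tier never sees fields the task did not need, whatever its retention guarantees.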

The point is not brand worship; it is verifiability and controls, which regulators and customers now expect when sensitive personal data interacts with large models and cloud inference. Here is the thing: when a provider can credibly claim no retention, limited scope, and external review, both risk and breach blast radius shrink compared with generic cloud flows that leave data resident on shared servers.

Vendors, Data Brokers, and Cross-Border Flows

The fastest way to lose sensitive personal data is through a third-party weakness: supply chain compromise ranks among the costliest and longest-to-resolve breach vectors, and enforcement actions increasingly punish weak vendor oversight. Treat processors, SaaS providers, AI plug-ins, and data enrichment sources as extensions of the security boundary, and demand attestation of access controls, encryption, retention, and sub-processor practices before any personal data flows.
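The attestation requirement translates naturally into a gate that blocks personal data flows until every required attestation is on file. The attestation names and `Vendor` fields below are hypothetical; a real program would also track expiry dates and evidence.

```python
from dataclasses import dataclass, field

# Hypothetical minimum attestations before any personal data flows to a third party.
REQUIRED_ATTESTATIONS = frozenset({
    "access_controls",
    "encryption_at_rest",
    "encryption_in_transit",
    "retention_policy",
    "subprocessor_list",
})

@dataclass
class Vendor:
    name: str
    kind: str  # e.g. "processor", "saas", "ai_plugin", "enrichment"
    attestations: set = field(default_factory=set)

def missing_attestations(vendor: Vendor) -> list:
    """Attestations still outstanding; an empty list means the vendor clears the gate."""
    return sorted(REQUIRED_ATTESTATIONS - vendor.attestations)

def may_receive_personal_data(vendor: Vendor) -> bool:
    return not missing_attestations(vendor)
```

Returning the missing items, not just a yes or no, gives procurement a concrete list to send back to the vendor.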

Keep a register of all international transfers and the specific safeguards in place, because recent penalties make clear that contractual promises alone are insufficient without technical measures and risk assessments. Tie payment terms and renewals to privacy KPIs: when 32 percent of breached organizations paid regulatory fines and U.S. costs keep rising, vendor negligence is a bottom-line issue as much as a legal one.
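A transfer register can flag entries that rely on the contract alone. The record fields and the `renewal_blocked` rule, which ties renewals to open gaps as one possible privacy KPI, are hypothetical illustrations.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TransferRecord:
    vendor: str
    destination: str                # destination country or region
    safeguard: str                  # legal mechanism, e.g. "SCCs" or "adequacy decision"
    risk_assessment_done: bool      # documented transfer risk assessment on file
    technical_measures: tuple = ()  # e.g. ("encryption_in_transit", "pseudonymization")

def transfer_gaps(register):
    """Flag transfers that lean on the contract alone: no risk assessment or no technical measures."""
    return [t for t in register if not t.risk_assessment_done or not t.technical_measures]

def renewal_blocked(vendor_name, register):
    """Hypothetical KPI rule: a vendor with any open transfer gap is not renewed until it is fixed."""
    return any(t.vendor == vendor_name for t in transfer_gaps(register))
```

Each flag identifies which half is missing, the assessment or the technical measures, so remediation can be assigned to legal or engineering accordingly.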

Key Voices and Benefits

“Private Cloud Compute is a new frontier for AI privacy,” Apple’s security team wrote, framing PCC as a system for private AI processing that researchers can inspect. If delivered as designed, it could reset expectations for how sensitive data interacts with cloud-scale compute. That transparency pitch contrasts with Microsoft’s Recall, which moved from default-on to opt-in, added encryption at rest, and layered on Windows Hello protections after critics flagged unencrypted local data and broad capture of on-screen content.

Privacy teams benefit when major platforms normalize opt-in, encryption, and deletion controls, because those patterns are directly translatable to enterprise governance for sensitive personal data in mixed device and cloud environments. The practical benefit is faster audits and clearer evidence for regulators and customers who already signal that trust depends on strong, verifiable controls in the age of AI.

Looking Ahead

Analysts now predict privacy budgets will tilt toward AI governance, with Cisco’s 2025 study indicating that organizations plan to reallocate privacy spend into AI controls and that most see positive ROI from strong privacy investments, especially where trust drives conversion. Expect more architecture-level claims like PCC, more feature reversals like Recall’s opt-in, and more fines that target performative compliance, after penalties in the first half of 2025 alone crossed 3 billion euros.

Consumers are paying attention: concern about data use remains high, which creates a marketing upside for companies that can show real minimization and verifiable privacy, not just policy pages. In the short term, organizations that compress breach lifecycles and harden AI access controls will keep costs closer to the global average and away from the U.S. premium that fines and escalation currently impose.

Closing Thought

If private-by-design AI becomes the standard and enforcement stays sharp, will enterprises rebuild enough trust to turn privacy from a compliance drag into a competitive moat, or will the next breach drag everyone back to the expensive side of the curve?

Appendix: Expanded Context and Practical Tie-Ins

Breach costs and AI gap: IBM’s 2025 report shows the first global cost decline in five years, yet the United States set a new high; 13 percent of organizations reported breaches involving AI models or applications, and 97 percent of those organizations lacked proper AI access controls.

Microsoft Recall changes: After criticism over unencrypted data and broad capture, Microsoft made Recall opt-in and added encrypted snapshots, selective monitoring, content filtering, and Windows Hello protection.

Apple PCC posture: Apple positions PCC as inspectable, stateless computation for AI tasks, sending only necessary data and opening materials for independent research to verify claims.

Regulatory intensity: GDPR fines have reached into the billions in 2025, with major cases reinforcing that technical safeguards and ongoing oversight are mandatory for data transfers and sensitive processing.

Trust dynamics: Cisco’s benchmark ties privacy to customer purchasing and ROI, while Pew data shows sustained concern about institutional data use, which increases reputational risk when incidents occur.